A Pedagogical Framework for Teaching Effective LLM Use in Programming Education

The integration of Large Language Models (LLMs) into software development workflows is now effectively inevitable, so computer science educators need to prepare students to use these tools effectively and responsibly. Recent research shows that LLMs can solve most of the assignments and exams traditionally used in CS1 (introductory programming) courses. At the same time, professional software engineers increasingly rely on these tools, which raises the question of whether, and how, we should train students to use them.

Rather than viewing LLMs as threats to traditional programming education, we should embrace a structured pedagogical approach that develops students’ ability to work productively with AI tools while maintaining their problem-solving skills and critical thinking abilities.

A Three-Stage Developmental Framework

Based on emerging educational research, pedagogical best practices, and my own experience using AI tools for software engineering, I propose a three-stage progression that reflects both cognitive development and increasing technical sophistication:

Stage 1: AI as Consultant (Foundational Level)

Pedagogical Goals:

- Develop effective question formulation skills

- Build critical evaluation capabilities

- Learn to iterate and refine queries

- Understand LLM limitations and biases

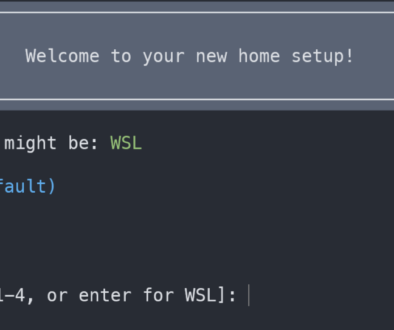

Learning Activities: Students interact with LLMs primarily through chat interfaces (ChatGPT, Claude, etc.) for research and problem-solving support. Research suggests that providing structured guidance, such as explicit instructions or a requirement that students attempt problems independently before refining their solutions with LLM assistance, can reduce unproductive queries and increase student engagement.

Essential Skills to Teach:

- Problem Decomposition: Before querying the LLM, students must define their problem, attempt a solution, and identify specific roadblocks

- Critical Evaluation: Use the “5 whys” technique, repeatedly asking “why is this the best approach?”, to validate AI responses

- Source Validation: Cross-reference AI responses with official documentation and trusted sources
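
To make the problem-decomposition step concrete, a course could give students a fill-in template that must be complete before any query is sent. The Python sketch below is a minimal illustration under that assumption; `StudentQuery`, `build_query`, and the field names are hypothetical teaching aids, not part of any LLM product's API.

```python
from dataclasses import dataclass


@dataclass
class StudentQuery:
    """Hypothetical record a student completes before asking an LLM anything."""
    problem: str    # what the code should do, in the student's own words
    attempt: str    # code or reasoning the student has already tried
    roadblock: str  # the specific point where that attempt breaks down


def build_query(q: StudentQuery) -> str:
    """Assemble a focused prompt; refuse to build one if any part is blank."""
    for field_name in ("problem", "attempt", "roadblock"):
        if not getattr(q, field_name).strip():
            raise ValueError(f"fill in '{field_name}' before querying the LLM")
    return (
        f"Problem: {q.problem}\n"
        f"My attempt:\n{q.attempt}\n"
        f"Where I am stuck: {q.roadblock}\n"
        # Final line nudges the model toward answers the student can cross-check.
        "Explain why your suggestion works and name the documentation it relies on."
    )


if __name__ == "__main__":
    query = StudentQuery(
        problem="Count how many words in a sentence are longer than 3 letters",
        attempt="count = len([w for w in sentence if len(w) > 3])",
        roadblock="The count is always 0, even for long sentences",
    )
    print(build_query(query))
```

Refusing to build a prompt with an empty `attempt` enforces the “attempt first” rule mechanically, and the closing request for documentation sets up the source-validation step.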

Assessment Strategies:

- Query portfolios showing progression from vague to specific questions

- Reflection essays on when AI provided helpful vs. misleading information

- Compare-and-contrast exercises between AI responses and authoritative sources

Stage 2: AI as Programming Partner (Intermediate Level)

Pedagogical Goals:

- Understand code completion and suggestion systems

- Develop prompt engineering skills for code generation

- Learn to read, modify, and debug AI-generated code

- Build habits of testing and verification

Learning Activities: Students work with embedded IDE tools (GitHub Copilot, Continue, etc.) that provide real-time coding assistance. This approach allows students to focus on problem-solving and algorithms while relying on automatic code generation for implementation details.

Essential Skills to Teach:

- Contextual Prompting: Writing meaningful comments and function signatures that guide AI completion

- Code Review Skills: Systematically reading and understanding AI-generated code

- Testing Mindset: Always creating test cases to verify AI-generated solutions

- Incremental Development: Using AI for small, well-defined tasks rather than complete solutions
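
Contextual prompting and the testing mindset fit together: the signature and docstring a student writes act as the prompt, and the same docstring is the source of the tests. The Python sketch below is illustrative only; `median` is a hypothetical exercise, and its body stands in for whatever completion an assistant might propose, not actual output from Copilot or any other tool.

```python
def median(values: list[float]) -> float:
    """Return the median of a non-empty list without modifying it.

    For an even number of items, return the mean of the two middle values.
    Raise ValueError if the list is empty.
    """
    # Body below stands in for an AI-suggested completion; the student reviews it.
    if not values:
        raise ValueError("median() of empty list")
    ordered = sorted(values)  # sorted() returns a copy, so the input is untouched
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2


def test_median() -> None:
    """Student-written checks derived from the docstring, not from the body."""
    assert median([3, 1, 2]) == 2
    assert median([4, 1, 3, 2]) == 2.5
    data = [5, 1]
    median(data)
    assert data == [5, 1]  # the input list must not be reordered
    try:
        median([])
    except ValueError:
        pass
    else:
        raise AssertionError("empty input should raise ValueError")


test_median()
```

Because the tests come from the docstring rather than from the generated body, they would still catch a completion that, for example, sorted the caller's list in place with `values.sort()`.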

Scaffolding Techniques: Research on “learner-LLM co-decomposition” suggests that interactive systems which help learners construct their own, personalized solution steps support learning more effectively than generic assistance. Useful scaffolds include:

- Structured templates for common programming patterns

- Checkpoint reviews where students explain AI-generated code

- Peer programming sessions combining human creativity with AI efficiency
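
Checkpoint reviews work best when the generated code hides something worth explaining. The hypothetical Python example below shows a completion that runs without errors but shares one mutable default list across calls; asking a student to explain why both printed results match makes a compact checkpoint exercise. The function names are invented for illustration and do not come from any real assistant's output.

```python
from typing import Optional


def add_tag(tag: str, tags: list[str] = []) -> list[str]:
    # Plausible-looking suggestion: the default list is created once, when the
    # function is defined, so every call without `tags` appends to the same list.
    tags.append(tag)
    return tags


def add_tag_fixed(tag: str, tags: Optional[list[str]] = None) -> list[str]:
    # Reviewed version: build a fresh list on each call that omits `tags`.
    if tags is None:
        tags = []
    tags.append(tag)
    return tags


print(add_tag("a"), add_tag("b"))              # ['a', 'b'] ['a', 'b']
print(add_tag_fixed("a"), add_tag_fixed("b"))  # ['a'] ['b']
```

A student who can explain when Python evaluates default arguments has understood the code, not just accepted it.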

Stage 3: AI as Development Team Member (Advanced Level)

Pedagogical Goals:

- Manage complex, multi-step development tasks

- Coordinate AI assistance within professional workflows

- Apply software engineering best practices to AI-assisted development

- Develop leadership and delegation skills

Learning Activities: Students use advanced AI coding agents (e.g., Claude Code, GitHub Copilot Workspace) to complete substantial projects while maintaining oversight and quality control.

Essential Skills to Teach:

- Requirements Definition: Creating clear, testable specifications for AI systems

- Code Review and Quality Assurance: Implementing systematic review processes

- Documentation and Maintainability: Ensuring AI-generated code meets professional standards

- Security Awareness: Analyzing and interpreting generated code for potential security vulnerabilities

- Project Management: Breaking large tasks into manageable, AI-appropriate subtasks
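
Security awareness is easier to teach with a concrete defect. The sketch below uses Python's built-in `sqlite3` module; the `users` table, the function names, and the premise that the unsafe version came from an AI agent are illustrative assumptions rather than output from any particular tool.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Pattern a reviewer should flag: user input is spliced into the SQL text,
    # so a name like "x' OR '1'='1" changes the meaning of the query.
    return conn.execute(f"SELECT id, name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Fix: pass the value as a bound parameter; sqlite3 never parses it as SQL.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"
print(find_user_unsafe(conn, payload))  # returns every row in the table
print(find_user_safe(conn, payload))    # []
```

The same pair doubles as a requirements exercise: a testable specification such as “looking up a name that no user has must return no rows” would have rejected the unsafe version before it reached review.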

Pedagogical Considerations Across All Stages

Avoiding Over-Reliance

Studies have found that students who used LLMs on assignments performed better on those problems but worse on overall assessments, suggesting that excessive dependence can impair essential independent problem-solving skills. To prevent this:

- Require AI-free coding exercises and assessments

- Implement “explain your reasoning” requirements for AI-assisted work

- Create assignments that require creative problem-solving beyond AI capabilities

Scaffolding Learning Progression

LLMs can serve as scaffolding throughout the problem-solving process: helping students clarify goals, supporting higher-level cognitive activity, and promoting continuous improvement through guided reflection. Effective scaffolding includes:

- Structured Progression: Move from highly guided to increasingly independent use

- Metacognitive Reflection: Regular discussions about when and why to use AI assistance

- Error Analysis: Studying and learning from AI mistakes and limitations

Assessment Adaptation

Traditional assessment methods may need revision:

- Process-Focused Evaluation: Assess problem-solving approach, not just final code

- Collaborative Assessment: Evaluate human-AI teamwork effectiveness

- Transfer Testing: Ensure students can work independently when AI is unavailable

Ethical Considerations

Address challenges around academic integrity while upholding the goal of developing competent, ethical software professionals:

- Clear policies on appropriate AI use in different contexts

- Discussion of professional ethics and AI tool limitations

- Training in attribution and intellectual property considerations

Implementation Recommendations

- Start with Fundamentals: Ensure students have basic programming competency before introducing AI tools

- Progressive Complexity: Move through stages systematically, not skipping developmental steps

- Regular Calibration: Frequently assess whether students maintain independent problem-solving abilities

- Professional Context: Connect each stage to real-world software development practices

- Continuous Adaptation: As purpose-built educational AI systems become more sophisticated and aligned with learning science principles, adjust approaches accordingly

This framework acknowledges that LLMs capable of generating accurate source code from natural-language prompts are likely to improve professional developer productivity, and it aims to ensure that students develop both the technical skills and the critical thinking needed for effective human-AI collaboration in their careers.

By implementing this structured approach, CS educators can prepare students not just to use AI tools, but to use them thoughtfully, effectively, and ethically throughout their professional development.